I have published close to a hundred research papers, mostly in peer-reviewed mainstream journals but at times also in some so-called predatory journals. For me, particularly at this stage, all of them are interesting experiences in different ways. A simple thing I am going to do now is to compare two ‘objective’ measures, namely citations per year and the impact factor of the journal in which each paper was published, with my own subjective judgment of the importance of that work. Do the three have anything to do with each other?

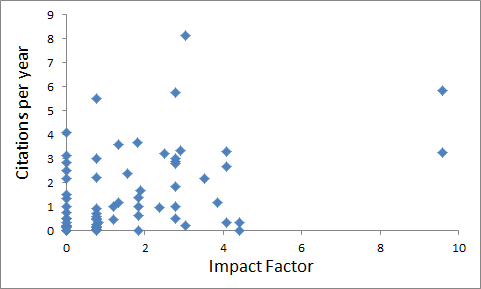

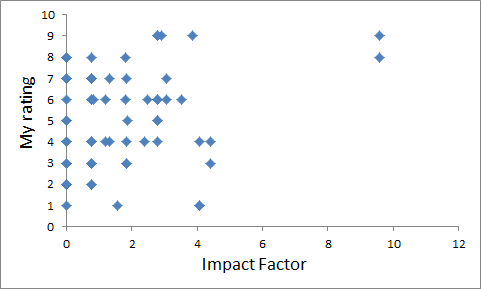

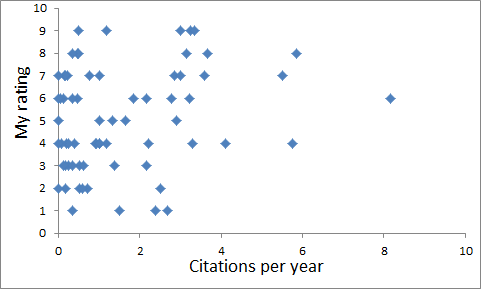

Here are the scatter diagrams of the three measures plotted against each other. I must state first that two outliers are not included in these diagrams. My most cited paper, with close to 1000 citations, was published in 2001, which makes its citations per year close to 50. It was published in a journal that has an impact factor of 1.8 today, and that has remained more or less the same over many years. At the other extreme is a paper published in 1997 in a journal that has an impact factor of 59 today. In 23 years it was cited only 17 times. So the two extremes are actually the reverse of the expected relationship, but let us leave them out as outliers. The rest of the papers make the scatters shown above. Needless to say, the three measures do not show any correlation with each other.

Relationship (or the lack of it) between the 2019 impact factor of the journal in which a paper was published and the number of citations received per year so far: impact factors are based on the average number of citations received by the papers in a journal, and therefore at least a weak positive correlation with the number of citations is expected in the sample. But my sample does not show any. Nevertheless, the average agrees: if a line with a slope of one is drawn, half of my papers lie above it and half below, more or less symmetrically.
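For readers who want to try the same comparison on their own publication list, here is a minimal sketch in Python. The function names and the six data points are hypothetical, made up purely for illustration; they are not my actual papers, and this is not the exact procedure behind the diagrams. It computes citations per year, a plain Pearson correlation between impact factor and citations per year, and the fraction of papers that lie above the line with a slope of one.

```python
from math import sqrt


def citations_per_year(total_citations, years_since_publication):
    """Average number of citations a paper received per year."""
    return total_citations / years_since_publication


def pearson_r(xs, ys):
    """Pearson correlation coefficient between two equal-length lists."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    return cov / sqrt(var_x * var_y)


def fraction_above_identity(impact_factors, cites_per_year):
    """Fraction of papers cited more often per year than the journal's
    impact factor, i.e. points above the line with a slope of one."""
    above = sum(1 for f, c in zip(impact_factors, cites_per_year) if c > f)
    return above / len(impact_factors)


# Hypothetical sample (NOT real data): one entry per paper.
impact_factors = [1.0, 2.0, 3.0, 4.0, 5.0, 6.0]
cites_per_year = [3.0, 1.0, 4.0, 2.5, 6.5, 3.5]

print("Pearson r:", pearson_r(impact_factors, cites_per_year))
print("Fraction above the slope-one line:",
      fraction_above_identity(impact_factors, cites_per_year))

# The second outlier mentioned in the text: 17 citations in 23 years.
print("Citations per year:", citations_per_year(17, 23))
```

A rank correlation such as Spearman's would be a reasonable alternative to Pearson here, since citation counts tend to be heavily skewed.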

A typical researcher thinks first of the highest-impact journal in which a given manuscript is likely to get published. If it gets rejected there, he or she goes for a slightly lower one, and so on until it finally gets published somewhere. I did the same at times, but not every time. Life is an opportunity to experiment, and I experimented with my own papers in a variety of ways. This included publishing in some so-called predatory journals too, and my experience there, particularly regarding how sensible the reviewers’ comments were, was not very different from that with high-impact mainstream journals. No doubt, the degree of sophistication was different.

My subjective ranking of my own papers is based on the novelty of the central idea, its relevance to fundamental science, its non-obviousness, its logical soundness and the clarity of the evidence. I made a conscious attempt not to factor in the importance given by others, or the extent to which a paper helped my own career or that of my students. The lack of correlation with the number of citations perhaps indicates that what I thought was good science, others may not have found worth citing. On the other hand, when I looked at the papers that had cited ours, exceptionally few of them had cited ours for its central argument. Often our paper was cited in support of a statement that it never made. On a few occasions our paper was cited in support of a view exactly opposite to its central argument.

As for the number of citations received, in general, papers that more or less supported the beliefs prevalent in a field were highly cited. The ones that opposed the prevalent beliefs were not cited. This is important. There was never any counter-argument. There was no debate, no criticism. People only seemed to ignore what they did not like. The quality of argument and evidence did not seem to matter much. Quantitatively, this has been the most common response. But science does not progress by the response of the masses, I mean the researcher masses. It progresses by the outliers. Rarely, someone sees a subtle point in what you wrote and appreciates it, rethinks, introspects, at times attacks back, but all in a very lively way. This is very rare, but science progresses by these rare interactions. I did experience such instances too, and these are the true rewards of a scientific life. A rare, critical, agreeing or disagreeing but curious and interested response is what science progresses by, and this is not countable! This is the reason why numerical indices might be useful in the business of a science career, but have nothing to do with science itself.