It is a common belief that immunity declines in old age. This is particularly relevant today because in the COVID-19 pandemic, fatality among the elderly is considerably higher. People believe that this is because of decreased immunity in old age. While some markers of immunity do go down with age, the question is whether old people are really more susceptible to infections. One needs to differentiate between intermediate markers and end points. Showing that a given treatment reduces cholesterol is different from showing that it actually reduces heart attacks. Similarly, showing that some antibody titres go down is different from actually showing an increased frequency of infections.

A few years ago, we took up this question as a “katta” question. I had 4 summer students then, and they were given the exercise of working out how to test whether markers like declining antibody titres could be equated to increased susceptibility to infection. Does the susceptibility to infection really go up? If yes, are there alternative interpretations, and how does one differentiate between them? An attractive-looking alternative interpretation came up: microorganisms that remain associated with the body over a long time evolve, within the lifetime of an individual host, to overcome the resistance mechanisms of that individual. This concept is not new. Bill Hamilton used it in 1980 to develop his own hypothesis for the origin of sex. Vidhya, one of the summer students, took the challenge seriously and not only came up with some testable predictions of the immunity decline versus pathogen evolution hypotheses but also dug up some data and tested them. Summer trainees are there for a very short period, and starting with the development of a new idea they can rarely complete any piece of work. I think for them, the ability to generate a new idea is more rewarding than being able to complete a piece of work.

I like to talk about ideas at all stages of development to as many people as I can. In many institutes there is a culture of keeping your ideas secret until you complete your work and publish it. I think this might be a good strategy in places where there is an overall dearth of ideas. Working with young minds, I have never experienced this state, so I talked about what Vidhya did to many people around me. Then Akanksha, who had done a lab rotation with me but had joined another lab by that time, thought that it was worth pursuing the idea further. So she and Ulfat, my long-time co-worker, did a more systematic data search, involved Vidhya once again, and started digging up more data to analyze and test the alternative hypotheses across a wide diversity of pathogens. None of this was priority work, so it took almost 5 years to complete and publish, which happened just a few weeks ago.

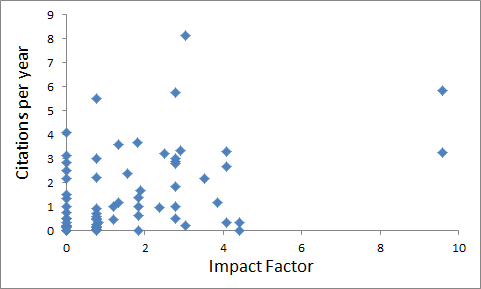

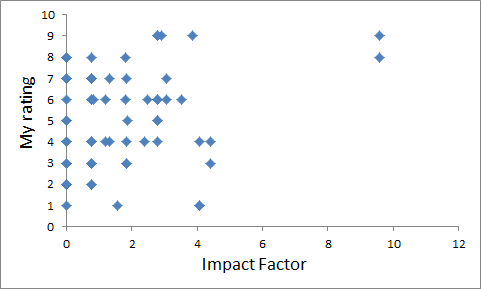

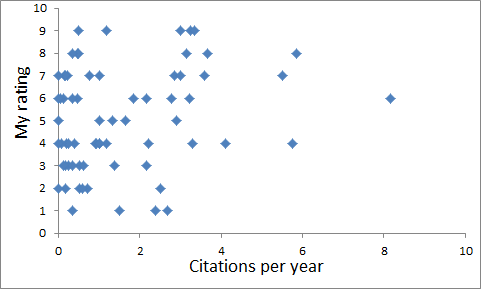

What the data essentially show is this (see figure). With age, the incidence of all opportunistic pathogens increases substantially, often 10 to 30 fold. But the incidence of externally acquired infections, that is, infections by pathogens which do not remain associated with the body over a long time, does not go up; on the contrary, it reduces with age. If there were a decline in general immunity, the incidence of all types of pathogens should have gone up, perhaps not to the same extent, but in the same direction. Instead, some infections become more common and some substantially less common, and there is a clear demarcation. Those by opportunistic pathogens go up. Those by externally acquired pathogens go down or don’t change. This pattern is compatible with the pathogen evolution hypothesis and not very supportive of a general decline in immunity.
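The logic of this comparison can be written down as a small sketch. It is only an illustration of the reasoning, not the analysis used in the paper. The pathogen names, the incidence numbers, the `tolerance` threshold and all function names are hypothetical placeholders, not values from our data.

```python
def fold_change(incidence_young, incidence_old):
    """Ratio of incidence in the oldest age class to that in the youngest."""
    return incidence_old / incidence_young


def direction(fc, tolerance=0.1):
    """Label a fold change as 'up', 'down' or 'flat' (tolerance is an assumed cutoff)."""
    if fc > 1 + tolerance:
        return "up"
    if fc < 1 - tolerance:
        return "down"
    return "flat"


def check_predictions(records, tolerance=0.1):
    """records: list of (name, category, incidence_young, incidence_old).

    General immunity decline predicts 'up' for every pathogen.
    Pathogen evolution predicts 'up' only for opportunistic pathogens,
    and 'down' or 'flat' for externally acquired ones.
    """
    trends = {
        name: (category, direction(fold_change(young, old), tolerance))
        for name, category, young, old in records
    }
    immunity_decline_ok = all(d == "up" for _, d in trends.values())
    pathogen_evolution_ok = all(
        (d == "up") if category == "opportunistic" else (d != "up")
        for category, d in trends.values()
    )
    return trends, immunity_decline_ok, pathogen_evolution_ok


# Hypothetical incidences per 100,000 (young, old); NOT real data.
HYPOTHETICAL_RECORDS = [
    ("opportunistic_A", "opportunistic", 2.0, 40.0),
    ("external_B", "external", 50.0, 30.0),
    ("external_C", "external", 10.0, 10.5),
]

trends, immunity_ok, evolution_ok = check_predictions(HYPOTHETICAL_RECORDS)
print(trends, immunity_ok, evolution_ok)
```

With placeholder numbers shaped like the pattern described above, only the pathogen evolution prediction holds; if every pathogen went up, the immunity decline prediction would hold and the two hypotheses would have to be separated some other way.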

There was another interesting pattern in the data. Although old people do not get outside infections more commonly, once infected, they are likely to collapse faster. This, we think, is because of a general physiological decline rather than an immunity decline. If it were an immunity decline, it should have affected incidence as well as morbidity. But age does not increase incidence; it selectively increases morbidity and mortality among those infected. This indicates that it is the physiological ability to cope with infections that declines with age, not immunity. If this is true, what should matter is overall physiological fitness, not the number called age. In those data, we couldn’t test this because no index of general physiological fitness was available.
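The distinction between getting infected and coping with the infection corresponds to two different ratios: incidence (cases per population) and case fatality (deaths per case). A minimal sketch, using hypothetical counts and hypothetical function names purely for illustration:

```python
def incidence_per_100k(population, cases):
    """New cases per 100,000 people in an age class."""
    return cases * 100_000 / population


def case_fatality(cases, deaths):
    """Fraction of infected individuals who die."""
    return deaths / cases


def old_to_young_ratios(young, old):
    """young, old: dicts with 'population', 'cases', 'deaths'.

    Returns (incidence ratio, case fatality ratio), old relative to young.
    """
    inc_ratio = (
        incidence_per_100k(old["population"], old["cases"])
        / incidence_per_100k(young["population"], young["cases"])
    )
    cfr_ratio = (
        case_fatality(old["cases"], old["deaths"])
        / case_fatality(young["cases"], young["deaths"])
    )
    return inc_ratio, cfr_ratio


# Hypothetical counts for two age classes; NOT real data.
young = {"population": 100_000, "cases": 200, "deaths": 2}
old = {"population": 100_000, "cases": 150, "deaths": 15}

inc_ratio, cfr_ratio = old_to_young_ratios(young, old)
print(f"incidence ratio: {inc_ratio:.2f}, case fatality ratio: {cfr_ratio:.2f}")
```

An incidence ratio at or below 1 together with a case fatality ratio well above 1 is the combination described above: no greater chance of catching the infection, but a poorer ability to cope with it.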

When we did this work, the current coronavirus pandemic was not even on the horizon, so obviously our paper does not have any data on it. Right from the beginning of the epidemic, people have been talking about old people being more susceptible. Now we have data on age-specific incidence in India to date, and they show the same pattern. The incidence actually comes down in the older age classes. One possible reason might be reduced exposure, but there is another interesting possibility which, to my surprise, no one seems to be talking about. Old people, who have experienced a large diversity of viruses in their lifetime, are more likely to have cross-immunity to a new virus. So old people are less likely to be affected by a new virus. On the other hand, the case fatality does increase substantially with age, but mainly in individuals who already show markers of physiological decline such as hypertension, diabetes or loss of cardiorespiratory fitness. This matches our hypothesis well.

Looking at patterns in data and making a hypothesis to explain them is one thing. Developing a hypothesis from theoretical considerations first, raising alternative hypotheses simultaneously, and then systematically looking at data to compare the alternatives is another. The level of scientific rigor in the latter is substantially higher. In published work, it is difficult to know which path was taken. People may actually have followed the former but pretend to have followed the latter. But in this case we had already published our hypothesis, along with supportive data, and now a new global picture has emerged which agrees with our hypothesis very well. This is heartening. The take-home lesson is that for old age care, fitness matters more than actual age for many communicable as well as non-communicable diseases. The exception is opportunistic pathogens. We cannot stop them from evolving, so that effect of age is inevitable, and so are those infections. But otherwise fitness is the key to overcoming aging and age-related diseases. In the long run, caring for the fitness of the elderly is a better approach than trying to protect them from external sources of infection.